BetaHub Blog

How to Handle Duplicate Bug Reports Without Losing Your Mind

April 22, 2026 · 10 min read

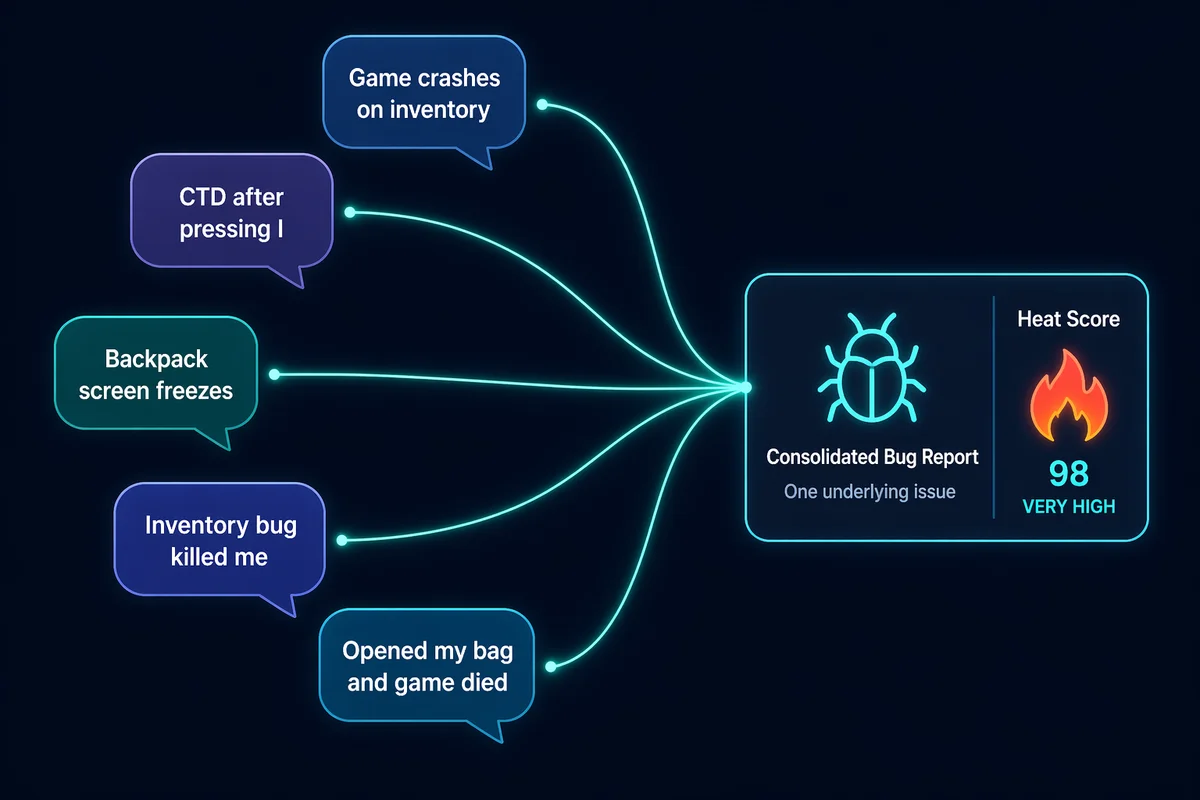

You know the feeling. You open your bug tracker Monday morning and see 47 new reports from the weekend. You start reading:

- “Game crashes when opening inventory”

- “CTD after pressing I key”

- “Inventory screen freezes then kicks me to desktop”

- “Backpack won’t open, game closes itself”

- “I was in the middle of a boss fight, opened my bag and the game died”

Five reports. One bug. And somewhere in the remaining 42 reports, three more players described the same crash in three more completely different ways. By the time you’ve read, compared, and triaged everything, it’s lunchtime and you haven’t written a single line of code.

Duplicate bug reports are the silent productivity killer of game development. On average, roughly 12% of player-submitted bug reports are duplicates — and in large, active communities, that number can hit 50%.

Why Players Report the Same Bug Differently

Before we talk solutions, it’s worth understanding why duplicates happen. It’s not because your players are lazy — it’s because they’re human.

Players describe what they experienced, not what went wrong technically. One player says “inventory crash.” Another says “the game froze when I pressed I.” A third says “I died to the boss because the game closed itself when I tried to use a potion.” Same root cause, three completely different descriptions.

Players don’t search before reporting. Even if you have a public bug board with a search bar, most players won’t use it. They just experienced something frustrating, they want to report it while it’s fresh, and they don’t want to wade through other people’s reports first. And honestly? That’s fine. You want a low barrier to reporting — the last thing you need is players who don’t report because the process is too involved.

Context changes everything. A crash in the inventory screen might have five different triggers. Player A crashes when opening the inventory during combat. Player B crashes when sorting items. Player C crashes only in multiplayer. These might be the same underlying bug or they might be three different bugs with similar symptoms. The only way to know is to read every report carefully.

Discord makes it worse. In a text channel, bug reports fly by in real time. There’s no searchable database, no “related issues” sidebar, no way for Player #47 to know that Players #1 through #46 already reported the same thing. Every report arrives as if it’s the first one.

The Manual Approach (And Why It Breaks)

Most small teams start with manual deduplication. It works — until it doesn’t.

The spreadsheet stage. Someone on the team (usually the community manager) reads every report, mentally compares it to existing bugs, and either creates a new ticket or adds a note to an existing one. This works for 10-20 reports a day. Maybe 30 if they’re fast.

The “known issues” list. You pin a list of known bugs in your Discord channel and ask players to check it before reporting. Participation rate: approximately zero. Players skip pinned messages like they skip tutorials.

The template approach. You create a bug report template with required fields (steps to reproduce, platform, build version) hoping that structured data will be easier to compare. This helps with report quality but does nothing for deduplication — two perfectly structured reports about the same crash are still duplicates that need human review.

The breaking point. Every one of these approaches has a ceiling, and it’s usually around 50-100 reports per day. Beyond that, manual deduplication becomes a full-time job. You’re paying someone to read the same bug described 15 different ways instead of paying someone to fix it.

The math is brutal: if half your reports are duplicates and you spend 3 minutes per report on triage, that’s 75 minutes per day on duplicate management alone at 50 reports/day. At 200 reports/day — common during a launch or major update — that’s 5 hours of pure duplicate sorting.
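To check that arithmetic against your own numbers, here is a minimal sketch. The function name and its defaults are just the figures from the paragraph above (half the reports are duplicates, 3 minutes of triage each), not measurements from any particular team.

```python
def duplicate_triage_minutes(reports_per_day: int,
                             duplicate_share: float = 0.5,
                             minutes_per_report: float = 3.0) -> float:
    """Minutes per day spent triaging reports that turn out to be duplicates."""
    return reports_per_day * duplicate_share * minutes_per_report


for volume in (50, 200):
    minutes = duplicate_triage_minutes(volume)
    print(f"{volume} reports/day: {minutes:.0f} min ({minutes / 60:.2f} h)")
# 50 reports/day: 75 min (1.25 h)
# 200 reports/day: 300 min (5.00 h)
```

Swap in your real duplicate rate and triage time to see where your own breaking point sits.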

What Actually Works: Semantic Deduplication

The key insight is that keyword matching doesn’t work for bug reports. Players don’t use consistent terminology. “Crash”, “freeze”, “CTD”, “game closed itself”, and “kicked to desktop” all describe the same failure, yet they share almost no words.
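You can see the problem with a few lines of code. This sketch scores word overlap between four of the reports from the top of this post using Jaccard similarity (shared words divided by total distinct words). The tokenizer is deliberately naive and is our own illustration, not part of any tracker.

```python
import re
from itertools import combinations


def keywords(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Share of distinct words the two texts have in common (0.0 to 1.0)."""
    words_a, words_b = keywords(a), keywords(b)
    if not words_a and not words_b:
        return 0.0
    return len(words_a & words_b) / len(words_a | words_b)


reports = [
    "Game crashes when opening inventory",
    "CTD after pressing I key",
    "Inventory screen freezes then kicks me to desktop",
    "Backpack won't open, game closes itself",
]

# Print the overlap score for every pair of reports.
for a, b in combinations(reports, 2):
    print(f"{jaccard(a, b):.2f}  {a!r} vs {b!r}")
```

Every pair scores below 0.1, and most score exactly zero, even though all four reports describe the same crash. No keyword threshold separates these from genuinely unrelated reports.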

Semantic matching solves this by comparing the meaning of reports rather than the words. Instead of checking whether two reports contain the same keywords, it converts each report into a mathematical representation of its meaning and compares those representations. “Inventory crash” and “game freezes on backpack screen” are far apart in keyword space but very close in meaning space.
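A common way to compare those representations is cosine similarity between embedding vectors. The sketch below shows only the comparison step. The four-dimensional vectors are made-up numbers chosen for illustration; a real embedding model would produce vectors with hundreds of dimensions, and nothing here reflects the specific model or metric BetaHub uses.

```python
import math


def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(u, v))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(y * y for y in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


# Hypothetical, hand-written "embeddings" (NOT output from a real model).
toy_embeddings = {
    "Inventory crash": [0.9, 0.8, 0.1, 0.0],
    "game freezes on backpack screen": [0.8, 0.9, 0.2, 0.1],
    "audio crackles in main menu": [0.1, 0.0, 0.9, 0.8],
}

base = toy_embeddings["Inventory crash"]
for text, vector in toy_embeddings.items():
    print(f"{cosine_similarity(base, vector):.2f}  {text}")
```

With these toy values, the two inventory reports score close to 1.0 against each other, while the unrelated audio report scores far lower. That gap is what a confidence threshold is applied to.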

This is the approach BetaHub uses for its duplicate detection system. Here’s how it works in practice:

How BetaHub’s Duplicate Detection Works

When a new bug report comes in — whether from Discord, an in-game plugin, or a web form — BetaHub’s AI processes it through several layers:

- Semantic analysis: The report is converted into a vector representation that captures its meaning, not just its words. “Game crashes when I open inventory” and “CTD after pressing I key” produce similar vectors despite sharing almost no words.

- Confidence-based matching: The system auto-merges only when it’s highly confident that two reports describe the same issue. The algorithm has gone through multiple iterations to reduce false positives.

- Automatic merging with media consolidation: When a duplicate is detected, the new report is linked to the original. All media (screenshots, videos, logs) from the duplicate is consolidated into the original report, giving developers more evidence to work with.
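The three layers above can be sketched as a single ingest step. This is a simplified illustration under our own assumptions, not BetaHub’s implementation: the `Report` fields, the `ingest` function, and the 0.90 threshold are all hypothetical, and the similarity scorer is passed in as a parameter so any semantic model could stand behind it.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

AUTO_MERGE_THRESHOLD = 0.90  # assumed value for illustration only


@dataclass
class Report:
    id: int
    text: str
    media: list[str] = field(default_factory=list)
    duplicate_of: Optional[int] = None
    duplicate_ids: list[int] = field(default_factory=list)


def ingest(new: Report, originals: list[Report],
           similarity: Callable[[str, str], float]) -> Report:
    """Link `new` to its best match if confident; otherwise file it as new.

    Returns the issue that now holds the report's evidence.
    """
    best = max(originals, key=lambda r: similarity(new.text, r.text),
               default=None)
    if best is not None and similarity(new.text, best.text) >= AUTO_MERGE_THRESHOLD:
        new.duplicate_of = best.id          # linked, not deleted
        best.duplicate_ids.append(new.id)
        best.media.extend(new.media)        # consolidate evidence
        return best
    originals.append(new)
    return new


issues = [Report(1, "Game crashes when opening inventory", media=["crash.log"])]
incoming = Report(2, "CTD after pressing I key", media=["clip.mp4"])

# Stand-in scorer with a hard-coded score; a real system would compare
# embeddings here.
fake_scores = {(incoming.text, issues[0].text): 0.94}
score = lambda a, b: fake_scores.get((a, b), 0.0)

holder = ingest(incoming, issues, score)
print(holder.id, holder.media, incoming.duplicate_of)
# 1 ['crash.log', 'clip.mp4'] 1
```

Note that the duplicate keeps its own record and simply points at the original, which is what makes unmerging possible later.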

What Happens to the Reporter

This is where most dedup systems get it wrong. Traditional approaches treat duplicates as waste — something to delete or hide. BetaHub treats them as signals.

When a player submits a report that’s identified as a duplicate:

- Their report is linked to the original issue, not deleted

- They can watch the original issue to track progress

- Their contribution increases the issue’s Heat score — a metric that combines duplicate count, watchers, recency, and priority to surface the most impactful bugs

- They’re never shamed for “submitting a duplicate.” The message is clear: every report matters because it tells us more players are experiencing this problem

The result: an issue reported by 15 different players doesn’t create 15 separate tickets cluttering your backlog. It creates one well-documented issue with a high Heat score that rises to the top of your priority list — which is exactly what should happen with a bug affecting that many players.
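To make the idea of a combined score concrete, here is one way such a metric could be built. This is purely a hypothetical formula: the weights, the seven-day half-life, and the `Issue` fields are our assumptions for illustration, and the actual Heat calculation is not described in this post.

```python
from dataclasses import dataclass

# Assumed priority multipliers (hypothetical values).
PRIORITY_WEIGHT = {"low": 1.0, "medium": 1.5, "high": 2.0, "critical": 3.0}


@dataclass
class Issue:
    title: str
    duplicates: int
    watchers: int
    days_since_last_report: float
    priority: str = "medium"


def heat(issue: Issue, half_life_days: float = 7.0) -> float:
    """Hypothetical score: player interest, scaled by priority, fading with age."""
    interest = 1 + issue.duplicates + 0.5 * issue.watchers
    recency = 0.5 ** (issue.days_since_last_report / half_life_days)
    return interest * PRIORITY_WEIGHT[issue.priority] * recency


backlog = [
    Issue("Typo in settings menu", duplicates=0, watchers=1,
          days_since_last_report=2),
    Issue("Inventory crash", duplicates=14, watchers=9,
          days_since_last_report=0, priority="high"),
    Issue("Old lighting glitch", duplicates=6, watchers=2,
          days_since_last_report=28),
]

# Hottest issues first.
for issue in sorted(backlog, key=heat, reverse=True):
    print(f"{heat(issue):6.2f}  {issue.title}")
```

In this toy backlog, the inventory crash reported by 15 players (one original plus 14 duplicates) lands at the top, while an older glitch with a handful of duplicates fades below a fresh but minor typo.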

Manual Override

The AI handles the heavy lifting, but developers stay in control:

- You can manually mark reports as duplicates if the system misses one

- You can unmerge incorrectly merged reports

- A “Similar Bugs” card shows potentially related reports that weren’t confident enough to auto-merge, so you can decide

- If a bug matches a previously closed issue, it’s flagged as a possible regression rather than merged as a duplicate
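The split between auto-merge, “Similar Bugs”, and regression flags can be pictured as a small routing function. The sketch below is an assumption-heavy illustration: the two thresholds, the `route` function, and the choice to check for regressions only at merge-level confidence are all ours, not documented behavior.

```python
from enum import Enum


class Action(Enum):
    AUTO_MERGE = "auto-merge into the original"
    POSSIBLE_REGRESSION = "flag as possible regression"
    SIMILAR_BUGS = "show in Similar Bugs for manual review"
    NEW_ISSUE = "create a new issue"


def route(confidence: float, matched_issue_closed: bool,
          merge_at: float = 0.90, suggest_at: float = 0.70) -> Action:
    """Decide what to do with a report given its best match (hypothetical rules)."""
    if confidence >= merge_at:
        # A confident match against a closed issue suggests the bug came back.
        if matched_issue_closed:
            return Action.POSSIBLE_REGRESSION
        return Action.AUTO_MERGE
    if confidence >= suggest_at:
        return Action.SIMILAR_BUGS
    return Action.NEW_ISSUE


print(route(0.95, matched_issue_closed=False).value)
print(route(0.95, matched_issue_closed=True).value)
print(route(0.80, matched_issue_closed=False).value)
print(route(0.30, matched_issue_closed=False).value)
```

Keeping a middle band between the two thresholds is the design choice that matters: borderline matches go to a human instead of being silently merged or silently ignored.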

Once issues are deduplicated and prioritized, you can push them to your existing dev tools — Jira, GitHub, Linear, and 7 others — so your deduplicated, evidence-rich issues feed directly into your development workflow.

The Before and After

Here’s what the shift from manual to automated deduplication actually looks like:

Before: 200 reports come in over the weekend. Your community manager spends Monday morning reading all of them, mentally comparing each to existing bugs, creating tickets for the new ones, and adding “me too” notes to existing ones. By noon, they’ve processed maybe half. Critical bugs from Saturday are still waiting in the queue.

After: 200 reports come in over the weekend. BetaHub processes them in real time. Monday morning, your dashboard shows 45 unique issues, sorted by Heat. The top issue has 28 duplicates, so it’s clearly the most widespread problem. The third issue has video evidence from 6 different players showing the exact conditions that trigger it. You know exactly what to fix first, and you have all the evidence you need to reproduce it.

This is roughly what happened with MADFINGER Games when Gray Zone Warfare launched to 72,000+ concurrent players. Over 40,000 feedback submissions poured in on day one. Hundreds of duplicate reports were automatically merged, immediately revealing that the most widespread issue was a “headless character” rendering bug — something that would have taken days to surface through manual triage.

A Different Way to Think About Duplicates

The most important shift isn’t technical — it’s philosophical.

Duplicates aren’t noise. They’re a voting system. Every time a player reports an issue that’s already been reported, they’re casting a vote for “this matters.” A bug with 30 duplicates isn’t a bug that wasted your time 30 times — it’s a bug that 30 players cared enough to tell you about. That’s valuable signal.

When you treat duplicates as signal rather than noise:

- Prioritization becomes democratic. The bugs that affect the most players naturally rise to the top, not just the bugs that your most vocal community member complains about.

- Players feel heard. Nobody likes reporting a bug and hearing nothing back. When duplicates are acknowledged and credited, players know their report mattered even if someone else reported it first.

- Your community keeps reporting. The moment players feel like reporting is pointless — because they get ignored, or told “already reported”, or discouraged from submitting — you lose your best free QA team.

Getting Started

If you’re currently drowning in duplicate reports, here’s a practical path forward:

If you have fewer than 50 reports/day: Manual dedup is manageable but tedious. Consider a simple tagging system in your existing tracker to group related reports. This won’t scale, but it’ll keep you organized for now.

If you have 50-200 reports/day: You’re at the breaking point where manual approaches consume more time than they save. This is where automated dedup tools pay for themselves. BetaHub’s free plan handles 1,000 bug reports per 30 days with full AI processing and duplicate detection — enough to test whether automated dedup actually helps before committing to a paid plan.

If you have 200+ reports/day: You need automated dedup yesterday. At this volume, the choice isn’t “should we automate?” — it’s “how many hours per week are we wasting on manual triage?” The answer is usually more than anyone realizes.

FAQ

Can BetaHub catch duplicates across different reporting channels?

Yes. Whether a report comes from Discord, an in-game plugin (Unity, Unreal), the Roblox widget, or a web form, all reports feed into the same system. A Discord report and an in-game report about the same bug will be matched and merged.

What if the AI incorrectly merges two different bugs?

You can unmerge them with one click. The system errs on the side of caution — it only auto-merges when confidence is high. Borderline cases appear in the “Similar Bugs” card for manual review.

Does duplicate detection work for feature suggestions too, or just bugs?

It works for both bug reports and feature suggestions. Duplicate feature requests are merged the same way, with the Heat score reflecting how many players asked for the same thing.

How is this different from just searching for keywords?

Keyword search requires players to use the same words. Semantic matching compares meaning. “The game crashes when I open my bag” and “CTD on inventory screen” share zero keywords but have nearly identical meaning. Keyword search would miss this; semantic matching catches it.

2026 © Upsoft sp. z o.o.